I get it now. Thanks.

Yes, that should be easy to automate. Maybe a shell script or Python?

You might need to feed it more than one logfile.

How do you automate the checking? Maybe feed the reply plus the input to a different LLM.

My first attempt would be a Python script that is hard-coded to include:

/var/log/auth.log

/var/log/ufw.log

/var/log/syslog

/var/log/journal/

/var/log/systemd/

Something like that.

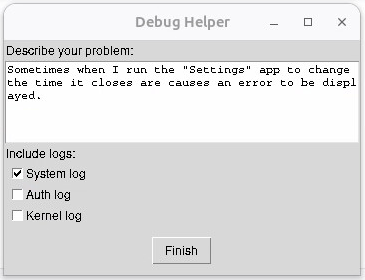

Maybe I launch it and it has checkboxes for several logs and I can include the ones I want and fill in a prompt describing the situation. I think I like the sound of that.

I could name the project “Ask Nev”.

You would not get much from me on logfiles.

I don’t even know how to read /var/log/journal… it is binary.

What I meant was… how do you validate the response?

Could you use something other than Copilot maybe?

There is an option in systemd to save the logs in a text format. I believe I read that somewhere on It’s FOSS or Linux Handbook.

Looks like I misread that. I asked Copilot and it reminded me of what I read.

Short answer: No. systemd’s journal is always stored in a binary format under /var/log/journal. However, you can configure journald to forward logs to syslog or export them in text, JSON, or other formats using journalctl.

That should do the trick.
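For anyone who wants to pull journal entries into a script, here is a minimal Python sketch of the journalctl export route. The function names are made up for illustration, and the defaults (200 lines, `short-iso` output) are assumptions rather than anything from the actual app. It relies only on the standard `journalctl` options `--no-pager`, `-n`, and `-o`.

```python
import json
import subprocess


def journalctl_command(lines=200, output="short-iso"):
    """Build a journalctl command that prints the newest entries as text."""
    return ["journalctl", "--no-pager", "-n", str(lines), "-o", output]


def read_journal(lines=200, output="short-iso"):
    """Run journalctl and return its output as a string."""
    result = subprocess.run(
        journalctl_command(lines, output),
        capture_output=True,
        text=True,
        errors="replace",  # tolerate bytes that are not valid UTF-8
        check=True,
    )
    return result.stdout


def messages_from_json(journal_json):
    """Extract MESSAGE fields from `journalctl -o json` output (one JSON object per line)."""
    messages = []
    for line in journal_json.splitlines():
        if not line.strip():
            continue
        entry = json.loads(line)
        message = entry.get("MESSAGE")
        if isinstance(message, str):  # skip binary or missing messages
            messages.append(message)
    return messages
```

Calling `read_journal()` gives plain text that can be pasted into a prompt, while `messages_from_json(read_journal(output="json"))` keeps only the message text. Reading the full journal may need root or membership in a group such as `systemd-journal`, depending on the distro.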

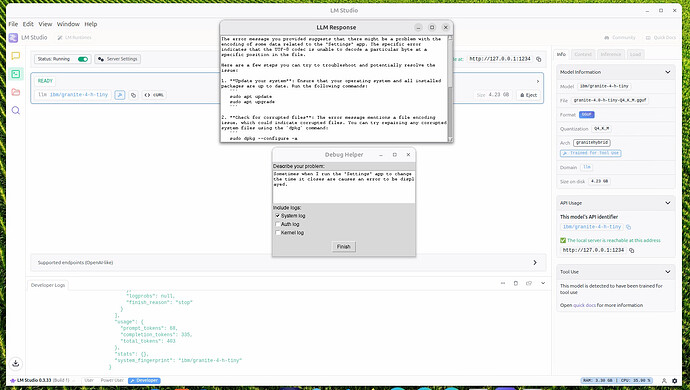

Ideally you could use any AI, and it could run locally. I thought I’d start by using LM Studio running on my own computer. I’ve been using the ibm/granite-4-h-tiny model. It’s pretty quick and light on resources but doesn’t seem quite as good with results as larger public models.

Using a public model would usually require a subscription and an API key. I like free for now.
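A rough sketch of what a call to the local model could look like, in case it helps anyone follow along. It assumes LM Studio's local server is switched on and listening on its default OpenAI-compatible address (`http://localhost:1234/v1`); the function names, the system prompt, and the temperature are my own placeholders, not what the real script uses.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running on its default address.
LM_STUDIO_URL = "http://localhost:1234/v1/chat/completions"
MODEL = "ibm/granite-4-h-tiny"


def build_payload(description, log_text, model=MODEL):
    """Combine the problem description and a log excerpt into a chat request."""
    system = "You are a Linux troubleshooting assistant. Base your answer on the log excerpt."
    user = f"Problem description:\n{description}\n\nLog excerpt:\n{log_text}"
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
        "temperature": 0.2,  # hypothetical setting, chosen for steadier answers
    }


def ask_local_llm(description, log_text, url=LM_STUDIO_URL, timeout=120):
    """Send the request to the local server and return the model's reply text."""
    data = json.dumps(build_payload(description, log_text)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Because the endpoint follows the OpenAI chat format, pointing `url` at a hosted service should mostly be a matter of changing the address and adding an authorization header, though that part is untested here.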

This is looking interesting.

It is about time I tried one of those local AIs.

Here is the first run.

My prompt was:

Sometimes when I run the “Settings” app to change the time it closes or causes an error to be displayed.

The answer was:

The error message you provided suggests that there might be a problem with the encoding of some data related to the “Settings” app. The specific error indicates that the UTF-8 codec is unable to decode a particular byte at a specific position in the file.

Here are a few steps you can try to troubleshoot and potentially resolve the issue:

Update your system: Ensure that your operating system and all installed packages are up to date. Run the following commands:

sudo apt update

sudo apt upgrade

Check for corrupted files: The error message mentions a file encoding issue, which could indicate corrupted files. You can try repairing any corrupted system files using the dpkg command:

sudo dpkg --configure -a

Reinstall the “Settings” app: If updating and repairing doesn’t resolve the issue, you may need to reinstall the “Settings” app. Use the following commands:

sudo apt remove ubuntu-settings

sudo apt install ubuntu-settings

Check for hardware issues: In rare cases, hardware problems can cause file corruption or encoding errors. If you suspect a hardware issue, run diagnostic tests on your system’s components (e.g., RAM, hard drive).

Seek further assistance: If none of the above steps resolve the issue, it would be helpful to seek additional support from the Ubuntu community forums or report the bug to the Ubuntu developers with detailed information about your system configuration and the error message.

Remember to create a backup of important data before attempting any major troubleshooting steps to avoid potential data loss.

You have to start somewhere.

Where did the logfiles come into it?

In the screenshot, the smaller dialog box is where I filled in the problem description. There is a checkbox below that with “System log” checked.

I had Copilot write the Python script for me and it suggested only using the first 2,000 characters of a log file so the LLM wouldn’t become confused. I suggested the last 2,000 characters might be more useful.

Example log files you might want to include:

LOG_FILES = {
    "System log": "/var/log/syslog",
    "Auth log": "/var/log/auth.log",
    "Kernel log": "/var/log/kern.log",
}
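To show the "last 2,000 characters" idea concretely, here is a small sketch that is not the actual script. `tail_chars` and `collect_logs` are hypothetical helper names. The file is decoded with `errors="replace"` on the assumption that logs can contain stray bytes that are not valid UTF-8.

```python
import os

LOG_FILES = {
    "System log": "/var/log/syslog",
    "Auth log": "/var/log/auth.log",
    "Kernel log": "/var/log/kern.log",
}


def tail_chars(path, limit=2000):
    """Return up to the last `limit` characters of a text file."""
    if limit <= 0:
        return ""
    with open(path, "rb") as handle:
        handle.seek(0, os.SEEK_END)
        size = handle.tell()
        # UTF-8 uses at most 4 bytes per character, so this window is large enough.
        handle.seek(max(0, size - limit * 4))
        chunk = handle.read()
    # Replace undecodable bytes instead of raising UnicodeDecodeError.
    text = chunk.decode("utf-8", errors="replace")
    return text[-limit:]


def collect_logs(selected, log_files=LOG_FILES, limit=2000):
    """Build one text block holding the tail of each selected log."""
    sections = []
    for label in selected:
        path = log_files[label]
        try:
            excerpt = tail_chars(path, limit)
        except OSError as error:
            excerpt = f"(could not read {path}: {error})"
        sections.append(f"--- {label} ({path}) ---\n{excerpt}")
    return "\n\n".join(sections)
```

Something like `collect_logs(["System log"])` would then produce the text that gets sent along with the problem description. Reading only the end of the file also avoids loading a very large log into memory.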

I missed that.

Telling it which Linux distro may help.
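One way to add the distro automatically is to read it from the os-release file, sketched below. This assumes Python 3.10 or newer, where `platform.freedesktop_os_release()` is available; `describe_distro` and `add_distro_context` are hypothetical names for illustration.

```python
import platform


def describe_distro():
    """Return a short distro description, or a fallback if it cannot be found."""
    fallback = "an unknown Linux distribution"
    try:
        # Reads /etc/os-release (or /usr/lib/os-release); needs Python 3.10+.
        info = platform.freedesktop_os_release()
    except (OSError, AttributeError):
        return fallback
    return info.get("PRETTY_NAME") or info.get("NAME") or fallback


def add_distro_context(description):
    """Prefix the user's problem description with the detected distro."""
    return f"The system is running {describe_distro()}.\n\n{description}"
```

The returned string can be used in place of the bare problem description when building the prompt.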

I updated the app to use a web form for input rather than a native Linux form. I also added an option to use either a local LLM or an LLM on HuggingFace. The free account I just created allows a limited number of calls per hour. The results for my test are very similar between the local LLM and HuggingFace. That’s probably due to the models used. They are different but seem to agree.

I could expose this after Christmas if someone wanted to test with me. I’m probably about done for the night. We’re headed out to a family get-together on Christmas Eve. Our family tradition.

Merry Christmas to all.

I’m a little late to the party here, but I agree with @abhishek: forums are for people-to-people interactions. If you’re going to use AI, at least put a disclaimer and, dare I suggest, edit it to fit your writing style.

I waste far too much time lurking on Reddit, and anytime I see a wall of text that’s clearly AI generated it turns me right off. Why would I waste my time formulating and typing out a reply if the OP (Original Poster) can’t be bothered to put in the effort? It feels like they’re wasting my time just reading it, tbh.

I am suggesting we should put an AI extract in quotes as well as use a disclaimer… the same as you would if you quoted a passage from a book.

I am glad you agree… the forum is for people-to-people interactions.

I agree with you also. If this or any forum becomes too impersonal, then I believe it would become a huge turn-off to most people. People are treated with respect here and the members here are friendly.

This is a good combination to encourage questions and have interactions with each other.

Well said, Howard. The only thing I would add to that is personal stories and reflections, to add body plus colour to aid understanding.

Yes, people like to read stories.

Reflections are hard to do well. They really need to highlight some new approach to a topic.